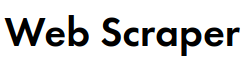

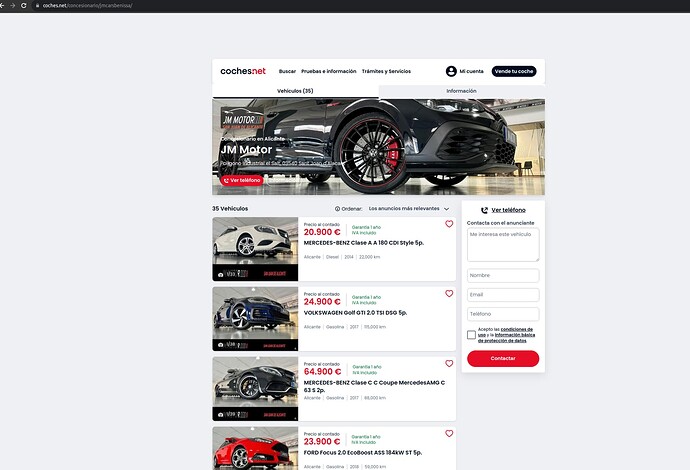

Hello, I have the following sitemap for scraping some links from Coches.net, a website that has some protection against scraping tools:

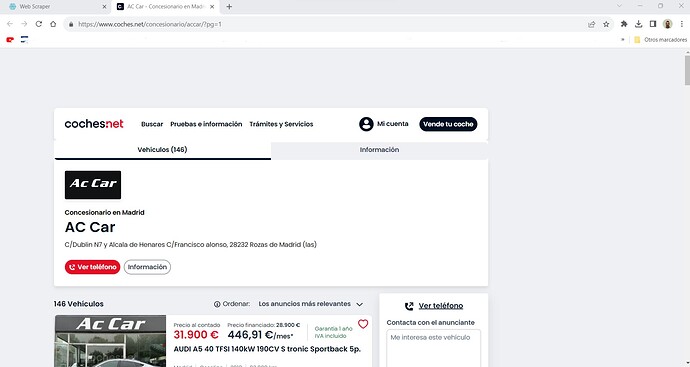

{"_id":"Cochesnet+30","startUrl":["https://www.coches.net/concesionario/jmcarsbenissa/"],"selectors":[{"id":"PaginationElement","parentSelectors":["_root"],"type":"SelectorElementClick","clickElementSelector":"li:nth-of-type(n+1) a.sui-AtomButton","clickElementUniquenessType":"uniqueText","clickType":"clickMore","delay":2000,"discardInitialElements":"discard-when-click-element-exists","multiple":true,"selector":"div.mt-AdvertisingRoadblock"},{"id":"Scrolling","parentSelectors":["PaginationElement"],"type":"SelectorElementScroll","selector":"div.mt-LayoutApp","multiple":true,"delay":100,"elementLimit":500},{"id":"Price","parentSelectors":["PaginationElement"],"type":"SelectorText","selector":"div.mt-CardAdPrice","multiple":true,"regex":""}]}

The main problem with this sitemap (it does not happen with all of them) is that when the start URL has pagination like 1-2-3-4, the scraper goes from page 1 to page 2 and then goes to "null". For some reason I don't understand, while it is on "null" it also extracts duplicated data, so this sitemap gives me 40 records when there should be only 35.

Does anyone know why? I've tried changing a few things, and now I get 40 records instead of 60, but there are only 35 to extract.

Any help would be appreciated!

Thanks a lot!